For years, Relativity Assisted Review has amplified review teams’ efforts, saving millions of dollars in the process. It’s been a crucial component of our customers’ workflows, and they’ve always shared their ideas for how we can make it even more valuable. With this month’s RelativityOne update, we’re delivering on their input with something big: active learning.

Active learning puts the most relevant documents in your reviewers’ hands—fast. It does this by continually learning, in real time, from your team’s coding decisions and using those decisions to deliver the documents that matter most. It’s the latest addition to the Assisted Review workflow and will revolutionize how you run your review. Here’s how.

1. You’ll get to the good stuff faster.

Active learning tracks coding decisions in real time to refine its understanding of what’s responsive. As your project progresses and reviewers code more documents, the engine analyzes each new decision and sharpens its picture of what’s most important to your matter, so you can get to the heart of the issue faster.
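To make the idea concrete, here’s a toy sketch of a continuous review queue in Python. It is not how RelativityOne’s engine works under the hood; the `ToyActiveLearner` class, its word-overlap scoring, and the sample documents are all hypothetical and exist only to illustrate the loop of "code a document, update the model, serve the next most likely responsive document."

```python
from collections import Counter


class ToyActiveLearner:
    """Illustrative only: ranks uncoded documents by word overlap with coded ones.

    This is a hypothetical stand-in, not the RelativityOne engine.
    """

    def __init__(self, documents):
        self.documents = dict(documents)  # doc_id -> text
        self.coded = {}                   # doc_id -> True (responsive) / False
        self.weights = Counter()          # word -> running score

    def score(self, doc_id):
        # Sum the learned weights of the distinct words in the document.
        words = set(self.documents[doc_id].lower().split())
        return sum(self.weights[w] for w in words)

    def next_document(self):
        # Serve the highest-scoring document that has not been coded yet.
        uncoded = [d for d in self.documents if d not in self.coded]
        if not uncoded:
            return None
        return max(uncoded, key=self.score)

    def record_decision(self, doc_id, responsive):
        # Every coding decision immediately nudges the word weights.
        self.coded[doc_id] = responsive
        delta = 1 if responsive else -1
        for w in set(self.documents[doc_id].lower().split()):
            self.weights[w] += delta


docs = {
    "A": "merger pricing memo",
    "B": "lunch menu friday",
    "C": "pricing agreement draft",
    "D": "friday parking notice",
}
learner = ToyActiveLearner(docs)
learner.record_decision("A", responsive=True)
learner.record_decision("B", responsive=False)
print(learner.next_document())  # prints C, which shares "pricing" with A
```

After just two decisions, the toy queue already prefers the pricing document over the parking notice. A real engine uses a far richer model, but the feedback loop has the same shape.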

2. You’ll spend less time on setup and administration.

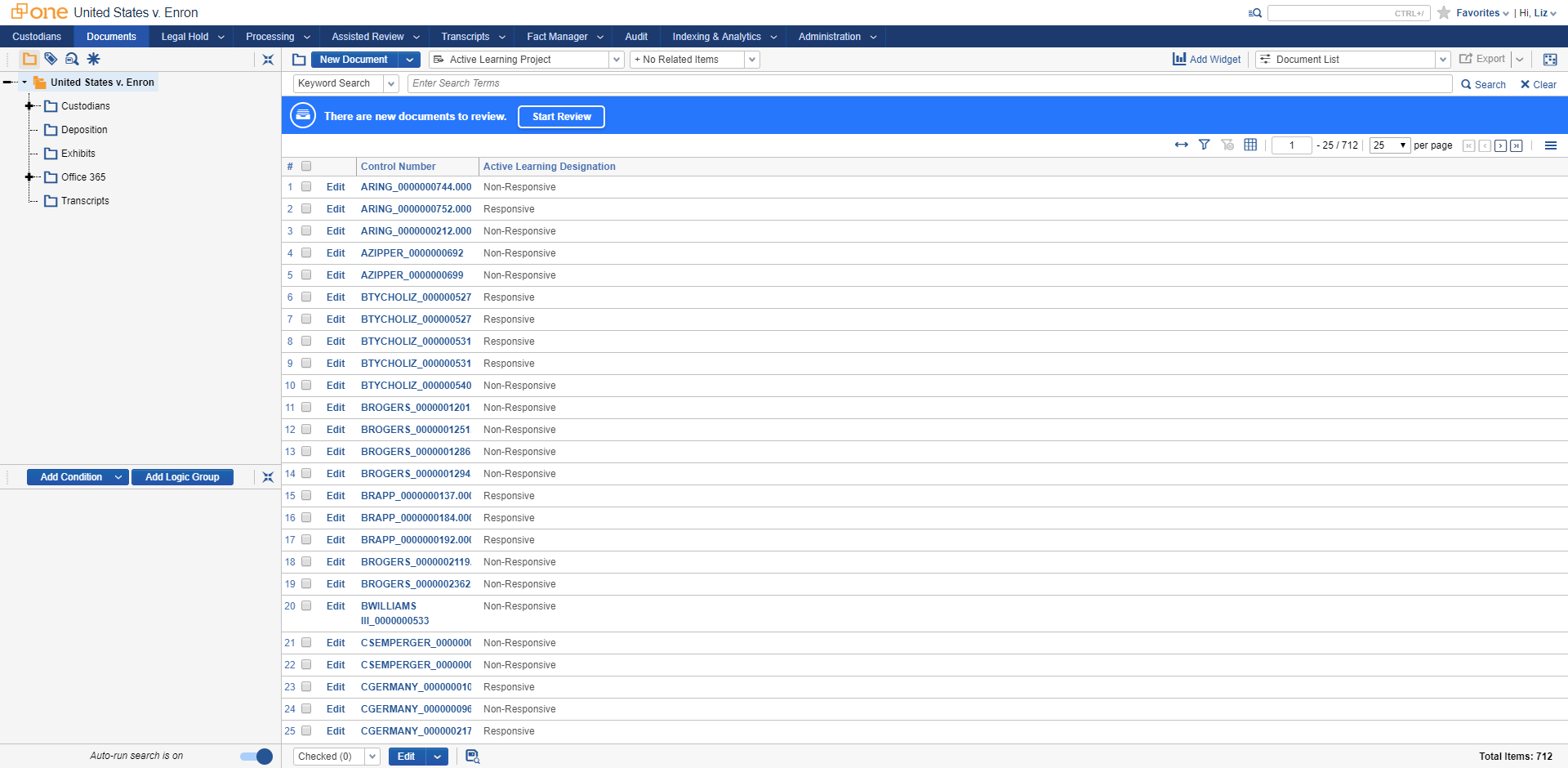

Getting to the most important documents doesn’t have to take a lot of effort. Active learning handles the brunt of the work, with minimal setup and human input. You can take a 100,000-document project from setup to review in under 10 minutes. There’s no need for training sets or manual batching. Reviewers simply log in, click a button, and start reviewing the most relevant data. And because the review queue is continuous, administrators don’t have to plan any next steps, and they can easily monitor the results.

3. You can flex your analytics muscles to meet the needs of any review project.

Active learning is the new kid on a block full of unstructured and structured analytics tools already available in RelativityOne. Combine email threading, clustering, sample-based learning, and visualizations with active learning to create unique workflows that match the needs of your project—whether it’s investigating the merits of a claim, sorting your data into key issues, or preparing evidence for litigation.

For example, you can use cluster visualization to zero in on relevant documents based on search terms and key players you’ve identified as important, then use those documents to kickstart active learning. Right off the bat, the active learning engine will have a solid understanding of what’s relevant, based on your coding of those documents, and will deliver more documents like them to your team.

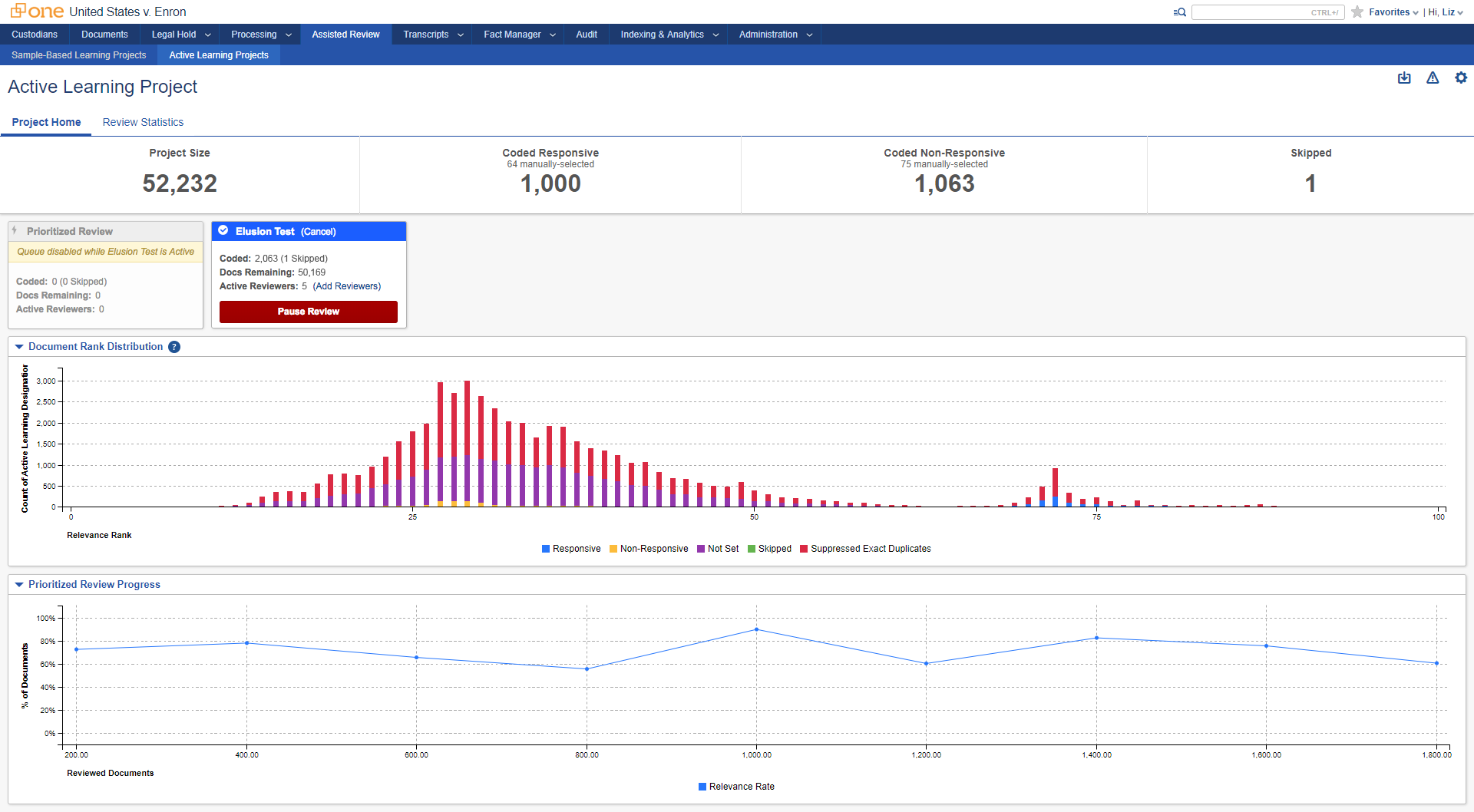

4. You’ll be able to call a project “done” with confidence.

Your review is winding down, but when is it safe to call it? Elusion testing can help with that. It’s a new validation test you run at the end of the project: by sampling the documents that haven’t been reviewed, it estimates how many relevant documents were missed. To help you determine when it’s time to run the elusion test, take a peek at the review progress report, which tracks the percentage of documents from the queue that were coded responsive. As your review progresses, RelativityOne will have fewer responsive documents left to serve up. Once the graph stops showing a high proportion of responsive documents, it’s time to run the elusion test.
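If you’d like to see the arithmetic behind those two checks, here’s a minimal sketch in Python. It assumes a simple random sample of the uncoded documents and a plain list of coding decisions; the function names `elusion_estimate` and `rolling_responsive_rate` and the sample figures are hypothetical, and this is not the calculation RelativityOne performs internally.

```python
def elusion_estimate(sample_decisions, uncoded_population):
    """Estimate the elusion rate from a random sample of uncoded documents.

    sample_decisions: list of booleans, True if a sampled document
    turned out to be responsive when reviewed.
    Returns (elusion_rate, estimated_missed_documents).
    """
    if not sample_decisions:
        raise ValueError("sample must contain at least one coded document")
    rate = sum(sample_decisions) / len(sample_decisions)
    return rate, round(rate * uncoded_population)


def rolling_responsive_rate(decisions, window):
    """Fraction of responsive documents in each consecutive window of the queue."""
    rates = []
    for start in range(0, len(decisions) - window + 1, window):
        chunk = decisions[start:start + window]
        rates.append(sum(chunk) / window)
    return rates


# Hypothetical numbers: 5 responsive documents in a sample of 500,
# drawn from 20,000 documents that were never reviewed.
sample = [True] * 5 + [False] * 495
rate, missed = elusion_estimate(sample, uncoded_population=20_000)
print(rate, missed)  # prints 0.01 200

# Hypothetical queue history: responsiveness drops off as review winds down.
history = [True, True, True, False, True, False, False, False]
print(rolling_responsive_rate(history, window=4))  # prints [0.75, 0.25]
```

A falling rolling rate is the signal to consider validation, and a low elusion rate is the evidence that little was left behind. In practice you would also want a confidence interval around the estimate, which this sketch leaves out.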

Active learning is a sought-after addition to Assisted Review, and we can’t wait to see how customers implement it in the field. However, it’s not the only enhancement to RelativityOne this month. From improvements to error resolution in Processing to more foreign language support in email threading (including Japanese and Portuguese), RelativityOne is making e-discovery work easier.