It’s a simple enough word with just four little syllables. Yet, there’s something about analytics in e-discovery that tends to spook people who have never used it, despite the time and money it can save.

“When people hear the word ‘analytics,’ they get intimidated because it’s perceived as being such a technical step,” says Sharri Wilner, litigation technology manager at Reed Smith LLP. “But if you break it down into its components and understand how it’s actually designed to aid you as a tool, you’re going to embrace it.”

So let’s do it. Let’s break down analytics, starting with three little features that pay off big and just so happen to share one thing in common: they require absolutely zero user input, making them the perfect starting point for even the most novice of the analytics novices.

Clustering: The “I Won’t Do an Investigation Without It” Tool

What Is It?

Clustering does exactly what it sounds like it does—it automatically groups conceptually related documents together, labeling each cluster with its most prevalent concepts so you can easily see what they’re all about. Long story short: clustering helps you organize your data.

“You don’t have to look at the data piece by piece to understand what you may or may not have,” says Angela Green, deputy director of the Mega Litigation Support Team at Leidos. “Clustering will give you a starting point, showing you things you probably already knew about the case, things you had no idea to ask about, and then some junk you can weed out.”

Think of clustering as a map to your data set—it helps you find conceptually relevant information and avoid stuff you just don’t need. Sometimes, it may even uncover hidden treasures.

“We once had a case where [the clusters] referenced meals, and it was weird to have so many records referencing meal types,” Angela recalls. “But it turned out to be critical because ‘meals’ was a code word. We wouldn’t have found that with keyword searching.”

Pro Tip: When to Use Clustering

Though you can use clustering on any project, it’s especially helpful in the beginning stages when you’re working with unfamiliar data.

Let’s say you’re conducting early case assessment or an investigation. Analytics will cluster your documents together—without any input from you or your team—allowing you to quickly begin organizing your data. For instance, you might tag certain clusters as “include” and others as “exclude” based on the type of content contained within them. Clusters labeled “basketball,” “office pool,” or “March Madness,” for example, might land in the exclude pile, as they’re likely not important to your project.

“I won’t do an investigation without clustering first,” says Sharri. “It helps us get to the root of the problem more quickly.”

Email Threading: The “If You Do Nothing Else, Do This” Tool

What Is It?

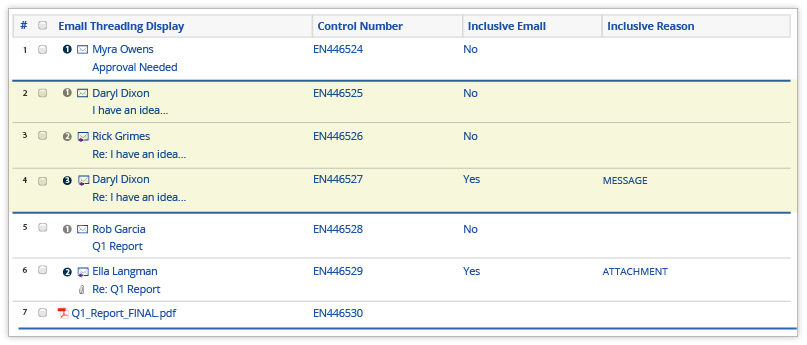

The concept of email threading is pretty simple and something you can find in your own inbox. Basically, analytics collects all the emails in a single conversation—replies, reply-alls, and forwards—and organizes them for a faster review. Rather than reviewing bits and pieces of a conversation separately (or multiple times), you get it all in one neatly bundled package.


“Email threading is basic analytics,” says Sharri. “It can significantly cut down the amount of time you spend touching your documents.”

Once analytics threads your emails, you can batch out the inclusive emails—those that include content from all emails in a chain—directly to your review team to ensure each person reviews entire conversations in one fell swoop. You can also examine how the emails are visually grouped together to better understand custodian communications.
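To make the idea of an inclusive email concrete, here is a minimal sketch in Python. It is not how any particular analytics engine works. It assumes a simplified model in which each email is described by the set of message segments it contains (its own text plus anything quoted from earlier messages), and it treats an email as inclusive when no other email in the thread contains everything it does and more. The names `find_inclusive` and `thread`, and the segment labels, are hypothetical.

```python
def find_inclusive(thread):
    """Return IDs of emails whose content is not fully contained in another email.

    thread maps an email ID to the set of message segments that email contains
    (an assumed, simplified representation of quoted content).
    """
    inclusive = []
    for email_id, segments in thread.items():
        # An email is "covered" if another email holds a strict superset of its segments.
        covered = any(
            other_id != email_id and segments < other_segments
            for other_id, other_segments in thread.items()
        )
        if not covered:
            inclusive.append(email_id)
    return sorted(inclusive)


# Hypothetical thread: three emails in a chain, plus a forward that branches off.
thread = {
    "email_1": {"m1"},
    "email_2": {"m1", "m2"},
    "email_3": {"m1", "m2", "m3"},
    "email_4": {"m1", "m4"},
}

inclusive_emails = find_inclusive(thread)
print(inclusive_emails)
```

In this toy thread, only the last reply in the main chain and the branching forward would need to go to reviewers, since the first two emails are fully quoted inside the third.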

“Run email threading,” advises Angela. “If nothing else, it organizes your data so you get the entire story at one time. You don’t want three different reviewers reviewing different portions of the same email and making different coding decisions on it.”

Pro Tip: Getting Email Threading Skeptics on Board

If you’re struggling to convince your team, or yourself, to give email threading a try, listen to that ubiquitous slogan (or Shia LaBeouf) and “Just do it.” Try it out to see how the threads look and feel on real data. According to Sharri, you won’t look back.

“[Skeptics] have to see the difference reviewing documents in email threads makes,” says Sharri. “Once they see that, it will click with them, and they won’t want to go back. Being able to visually understand how the threads work will make their learning curve shorter and their review time faster.”

Near-duplicate Identification: The “Use This for Any QC Work” Tool

What Is It?

With near-duplicate identification, analytics automatically finds and groups documents that are nearly identical, even taking into account word order and location. Often you’ll see near-duplicate identification grouping multiple versions of the same document, such as different drafts or addendums to contracts.

But what’s really great about near-duplicate identification is that when new documents are introduced into your data set, analytics will incorporate those newbies into existing near-duplicate groups (where applicable) and set apart any new information. Additionally, as you’re going through review, you can use a compare feature to highlight the differences and similarities between two documents.

Translation: near-duplicate identification saves you from rereading content.

Once analytics runs near-duplicate identification, it also gives you high-level details about the results, such as:

- Which document is the largest document in the near-duplicate group (aka the principal document)

Note: The principal document acts as the anchor document to which all other documents in the near-duplicate group are compared. You can adjust how similar a document must be to the principal document for analytics to sort it into the principal’s group (for example, setting the minimum similarity percentage at 100 percent would require a document to be an exact match).

- How similar each document in the group is to the principal document (based on a percentage)

- The average similarity percentage among documents in a near-duplicate group

- The total number and average number of documents in each group
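The relationship between the principal document, the minimum similarity percentage, and the group statistics can be sketched in a few lines of Python. This is an illustration only, not the algorithm a real analytics engine uses: it assumes word-level similarity from the standard library's `difflib.SequenceMatcher`, measures document size by character count, and counts the principal as 100 percent when averaging. The function names and sample documents are hypothetical.

```python
from difflib import SequenceMatcher


def similarity_pct(text_a, text_b):
    """Word-level similarity between two texts, as a percentage."""
    matcher = SequenceMatcher(None, text_a.split(), text_b.split(), autojunk=False)
    return 100.0 * matcher.ratio()


def group_near_duplicates(docs, min_similarity=90.0):
    """Group documents around the largest remaining document (the principal)."""
    # Largest documents first, so each group is anchored by its largest member.
    remaining = sorted(docs, key=lambda doc_id: len(docs[doc_id]), reverse=True)
    groups = []
    while remaining:
        principal = remaining.pop(0)
        members = {principal: 100.0}  # assumption: the principal counts as 100 percent
        for doc_id in list(remaining):
            pct = similarity_pct(docs[principal], docs[doc_id])
            if pct >= min_similarity:
                members[doc_id] = pct
                remaining.remove(doc_id)
        groups.append({
            "principal": principal,
            "members": members,
            "average_similarity": sum(members.values()) / len(members),
        })
    return groups


# Hypothetical documents: two contract drafts and an unrelated memo.
docs = {
    "draft_v1": "The supplier shall deliver the goods within thirty days of the order date",
    "draft_v2": "The supplier shall deliver the goods within sixty days of the order date and provide tracking",
    "memo": "Lunch menu for the office party on Friday",
}

groups = group_near_duplicates(docs, min_similarity=80.0)
for group in groups:
    print(group["principal"], sorted(group["members"]), round(group["average_similarity"], 1))
```

With the threshold at 80 percent, the two drafts land in one group and the memo stands alone. Raising `min_similarity` to 100 would require an exact match under this measure, so each document would end up in its own group.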

Pro Tip: When to Use Near-duplicate Identification

“You need to look at near-duplicates for any quality control work—especially on privilege review,” says Angela. “Near-duplicate identification looks at the actual text, while clustering looks at ideas. Privilege is very subjective, which makes near-duplicate identification the most efficient and effective analytics tool for QC.”

If you’re using Relativity, you can set up your QC review to display coding decisions on near-duplicate groupings. From there, you’ll be able to see your privilege coding and responsiveness coding, which can help identify inconsistencies.

For example, if there are any near-duplicate groups that contain both privileged and not privileged coding, you’ll need to investigate further. It’s possible one of those documents contains an attachment that affects the privilege call—or it’s possible your team’s coding is a bit off.
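The consistency check described above can be sketched as a short Python script. It assumes you have exported coding decisions to a simple list of records. The field names `group` and `privilege`, the document IDs, and the coding values are all hypothetical and do not reflect any product's export format.

```python
from collections import defaultdict


def find_conflicting_groups(coded_docs):
    """Return near-duplicate group IDs whose documents carry more than one privilege call."""
    calls_by_group = defaultdict(set)
    for doc in coded_docs:
        calls_by_group[doc["group"]].add(doc["privilege"])
    return sorted(group for group, calls in calls_by_group.items() if len(calls) > 1)


# Hypothetical export of coding decisions.
coded_docs = [
    {"doc_id": "DOC-001", "group": "G1", "privilege": "Privileged"},
    {"doc_id": "DOC-002", "group": "G1", "privilege": "Not Privileged"},
    {"doc_id": "DOC-003", "group": "G2", "privilege": "Not Privileged"},
    {"doc_id": "DOC-004", "group": "G2", "privilege": "Not Privileged"},
]

conflicts = find_conflicting_groups(coded_docs)
print(conflicts)
```

Any group the script returns is a candidate for a second look, not proof of an error, since an attachment or a small textual difference may justify the different calls.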

When Should You Not Use Analytics?

The short answer: never.

“There’s never a reason not to use analytics,” says Angela. “Tiny cases that only have 2,000 documents or less? I’m using analytics. And cases that have 12 million records or more? I’m still using analytics.”

Like most things in life, the first step is often the hardest to take. But there are tools out there to help you dive in and see exactly what analytics can do. Start with e-books, webinars, documentation, or even an industry conference like Relativity Fest.

“Once I show clients what their data looks like after it’s organized by [analytics], they get it,” says Angela.